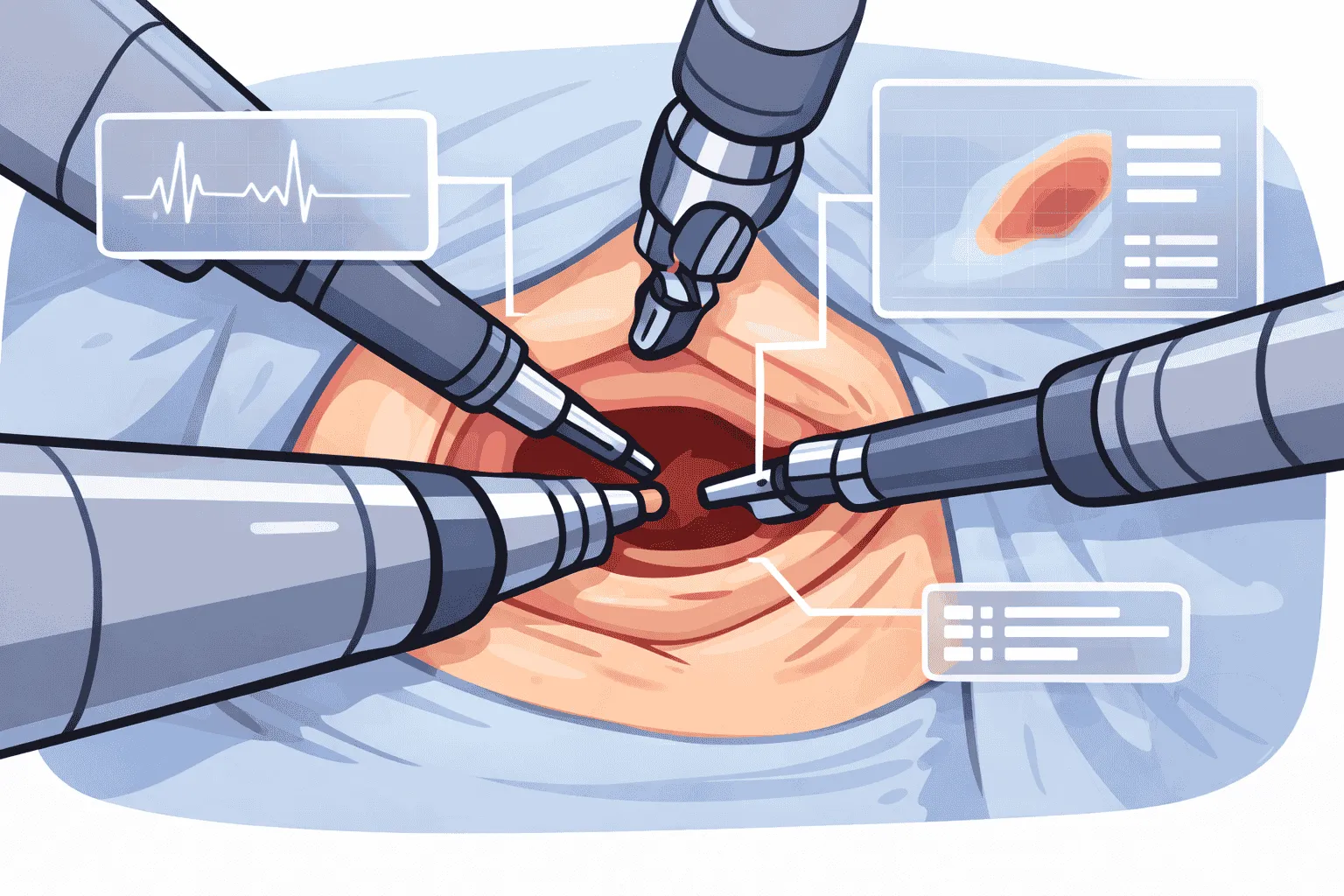

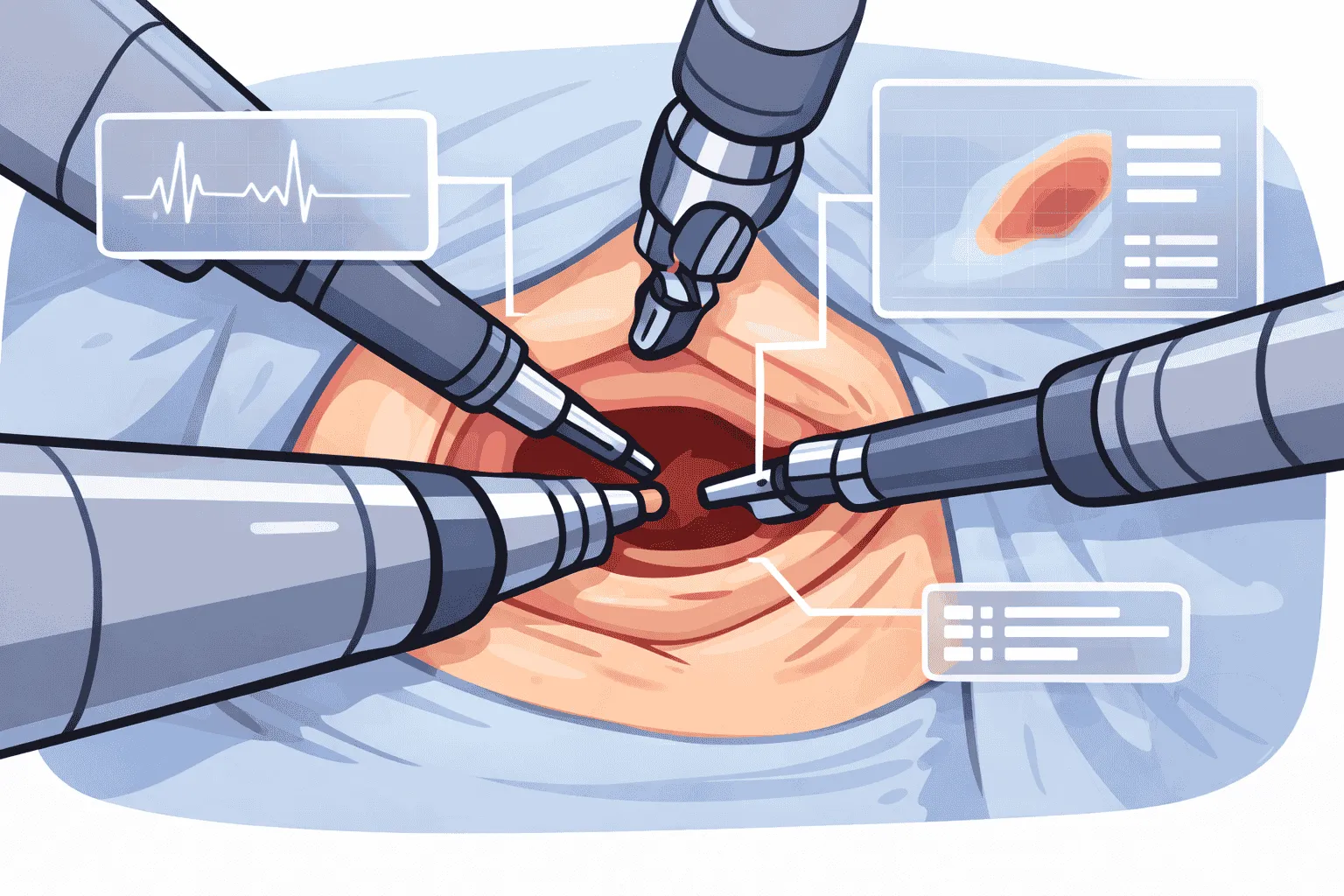

Critical Phase Anticipation

High situational awareness: the system autonomously classifies the current operative step and predicts the next one, streamlining OR workflow and keeping the entire team one step ahead.

Three integrated stages convert continuous surgical video into precise phase labels and next-step predictions.

The live endoscopic feed is streamed frame-by-frame into the AI pipeline, where spatial and temporal features are extracted to capture the evolving state of the operative field.

A temporal deep-learning model assigns each moment of the procedure, from incision through closure, to a defined surgical phase, with confidence scores updated on every frame.
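As a rough illustration of per-frame phase labeling with a running confidence score, the sketch below takes a stream of per-frame probability vectors and emits one label and confidence per frame. It is not the system's actual model: the phase names in `PHASES`, the function `label_frames`, and the `alpha` parameter are all hypothetical, and a simple exponential moving average stands in for the temporal deep-learning model described above.

```python
from typing import Iterable, Iterator, List, Tuple

# Hypothetical phase set; the source does not enumerate the actual phases.
PHASES = ["incision", "dissection", "closure"]


def label_frames(
    frame_probs: Iterable[List[float]], alpha: float = 0.3
) -> Iterator[Tuple[str, float]]:
    """Yield (phase, confidence) for every frame.

    frame_probs: per-frame class probabilities, assumed to come from some
        upstream classifier (one value per entry in PHASES).
    alpha: weight given to the newest frame in the moving average.
    """
    smoothed = None
    for probs in frame_probs:
        if smoothed is None:
            # First frame: nothing to smooth against yet.
            smoothed = list(probs)
        else:
            # Exponential moving average damps single-frame flicker.
            smoothed = [alpha * p + (1 - alpha) * s for p, s in zip(probs, smoothed)]
        best = max(range(len(smoothed)), key=smoothed.__getitem__)
        yield PHASES[best], smoothed[best]


if __name__ == "__main__":
    stream = [[0.8, 0.1, 0.1], [0.2, 0.7, 0.1], [0.1, 0.8, 0.1]]
    for phase, conf in label_frames(stream, alpha=0.5):
        print(f"{phase}: {conf:.2f}")
```

Smoothing trades a short delay at phase boundaries for stability: in the usage above, the second frame already favors the next phase on its own, but the label only switches once the evidence persists.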

Using the classified phase sequence, the system predicts the upcoming operative step and broadcasts it to the OR display, letting the team prepare instruments, adjust lighting, and pre-position resources in advance.
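One simple way to picture next-step prediction from a classified phase sequence is a first-order transition table built from previously recorded procedures. The sketch below is an assumption for illustration only, not the system's documented method; the helper names (`collapse`, `fit_transitions`, `predict_next`) and the example phase names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Optional, Tuple


def collapse(frame_labels: Iterable[str]) -> List[str]:
    """Collapse per-frame labels into the ordered sequence of distinct phases."""
    phases: List[str] = []
    for label in frame_labels:
        if not phases or phases[-1] != label:
            phases.append(label)
    return phases


def fit_transitions(histories: Iterable[List[str]]) -> Dict[str, Counter]:
    """Count how often each phase was followed by each other phase."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for history in histories:
        for current, following in zip(history, history[1:]):
            counts[current][following] += 1
    return counts


def predict_next(
    counts: Dict[str, Counter], current_phase: str
) -> Optional[Tuple[str, float]]:
    """Return the most frequent follower of current_phase and its relative
    frequency, or None if the phase was never seen with a follower."""
    followers = counts.get(current_phase)
    if not followers:
        return None
    phase, n = followers.most_common(1)[0]
    return phase, n / sum(followers.values())


if __name__ == "__main__":
    # Hypothetical phase sequences from earlier procedures.
    past = [
        ["incision", "dissection", "closure"],
        ["incision", "dissection", "hemostasis", "closure"],
        ["incision", "dissection", "closure"],
    ]
    table = fit_transitions(past)
    so_far = collapse(["incision", "incision", "dissection", "dissection"])
    print(predict_next(table, so_far[-1]))
```

The returned frequency gives the display a natural way to express uncertainty, for example by showing the predicted step only when it clears a chosen threshold.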